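Community Daily, Issue 75 (2017-10-20)

1. Integrating Elasticsearch 5.x with Spring Boot

http://t.cn/ROdYijN

2. Designing a search platform on Elasticsearch, and lessons learned from the pitfalls

http://t.cn/ROdYCun

3. An in-depth look at how the inverted index works

http://t.cn/RyUOW4X

Editor: laoyang360

Archive: https://elasticsearch.cn/publish/article/324

Subscribe: https://tinyletter.com/elastic-daily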

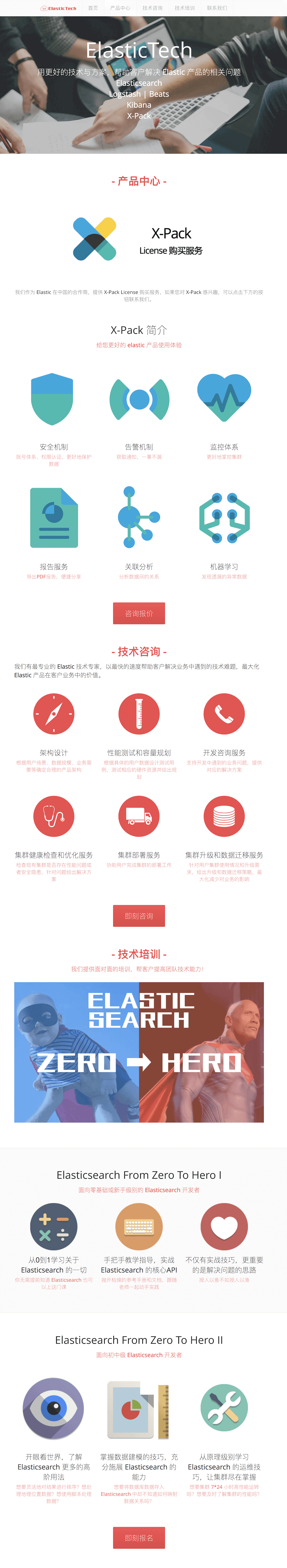

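Elastic's China partner site elastictech.cn is live! If you are interested in X-Pack's paid features, let's talk!

Shanghai Puxiang Information Technology Development Co., Ltd. (上海普翔信息科技发展有限公司) is Elastic's partner in China and handles X-Pack sales and consulting. Today it launched its business website, http://elastictech.cn.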

As the website shows, its main business lines are all Elastic-related:

- X-Pack license purchasing

- Local Elastic consulting services

- Local Elastic training services

If you are interested in X-Pack, leave a message on the site! You are also welcome to message me privately.

Posted by elastictech: 2017-10-19 17:44

Community Daily, Issue 74 (2017-10-19)

1. Vespa, Yahoo's open-source low-latency big data engine, compared with Lucene

http://t.cn/ROg0Fs2

2. How to deploy and scale Logstash on a highly available Elastic Stack

http://t.cn/ROgOwOC

3. Elasticsearch 5.x source code analysis: Shard Allocation and Cluster Reroute

http://t.cn/ROgO4fF

Upcoming event: Elastic Meetup in Changsha

https://elasticsearch.cn/article/320

Editor: 金桥

Archive: https://elasticsearch.cn/article/321

Subscribe: https://tinyletter.com/elastic-daily

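Elastic Meetup Changsha

The Elastic Meetup series for the second half of the year begins in Changsha, the capital of Hunan Province. Changsha was known in ancient times as Tanzhou and is nicknamed the Star City. It has kept the same name and site for three thousand years. It is celebrated as "the home of Qu Yuan and Jia Yi", "a famous city of the Chu and Han", and "the Zhu-Si of Xiaoxiang" (a center of Confucian learning).

Organizers:

This event is co-hosted by Elastic and Changsha Software Park.

Time:

2017-10-28, 2:30-5:30 PM

Venue:

4th-floor meeting room, Building 2, Xincheng Science and Technology Park (芯城科技园), 588 Yuelu Avenue, Yuelu District, Changsha

Talks:

- Elastic - Medcl - What's new in Elastic Stack 6.0

- Mango TV - 刘波涛 - Mango's logging journey

- Building an on-site search engine quickly with a crawler and Elasticsearch

- Lightning talks (5-10 minutes, sign up at the venue)

Registration:

http://elasticsearch.mikecrm.com/O6o0yq3

Meetups in Wuhan, Guangzhou, and Shenzhen are also being planned: https://elasticsearch.cn/article/261

About Elastic Meetup

Elastic Meetup is a regular offline event run by the Elastic Chinese community. It centers on Elastic's open-source products (Elasticsearch, Logstash, Kibana, and Beats) and related technologies. Talks explore practices and applications in search, real-time data analytics, log analysis, security, and other areas.

About Elastic

Elastic builds software that makes data usable in real time and at scale for search, logging, and analytics use cases. Founded in 2012, Elastic develops the open-source Elastic Stack (Elasticsearch, Kibana, Beats, and Logstash), X-Pack (commercial features), and Elastic Cloud (a hosted service). To date, its products have been downloaded more than 150 million times. Elastic is backed by more than $100 million in funding from Benchmark Capital, Index Ventures, and NEA. It has more than 600 employees in 30 countries. For more information, visit elastic.co/cn.

About Changsha Software Park

Changsha Software Park Co., Ltd. was founded in 2001 with registered capital of RMB 30 million. It is located in Lugu Science and Technology New City in the Changsha High-Tech Zone. The Ministry of Science and Technology has approved it as a National Torch Program Software Industry Base, a National Digital Media Technology Industrialization Base, and a National 863 Software Incubator. It is also the only national software industry base in central China approved by the National Development and Reform Commission and the Ministry of Information Industry.

It has more than 40 full-time management and technical staff, all with a bachelor's degree or higher. About 35% hold master's or doctoral degrees, and about 50% hold intermediate or senior professional titles. The staff have extensive experience in software industry management, industry services, and technical services.

Since its founding, the company has undertaken the following work:

- Torch Program projects of the Ministry of Science and Technology: the "Middleware Technology Public Application Development Platform", the "Changsha Resource Information Management System", and the "Changsha Software Park Key Common Technology Development and Application Platform for Advantageous Fields".

- Two national 863 projects: "Software Support Environment for Network Application Integration" and "Embedded Software Platform Supporting Intelligent Upgrades of Banking and Tax Control Devices".

- National Torch Program research topics.

All of these projects were completed successfully.

Thanks again to Changsha Software Park for its generous support!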

Community Daily, Issue 73 (2017-10-18)

1. Building a real-time log analysis platform with ELKB

http://t.cn/RCeSfaP

2. Putting a security layer in front of ES with Nginx

http://t.cn/ROB4eiQ

3. ES vs. Redis, from db-engines

http://t.cn/ROBqcYF

Editor: 江水

Archive: https://elasticsearch.cn/article/319

Subscribe: https://tinyletter.com/elastic-daily

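Community Daily, Issue 72 (2017-10-17)

1. A feast: Elastic's officially recommended top 10 talks of 2017. They cover machine learning, X-Pack, SQL, and more, and are worth bookmarking.

http://t.cn/R0cF6fm

2. What to pay special attention to when upgrading to Kibana 5.5.3.

http://t.cn/ROQdl04

3. Low cost, high return: using MapR's gateway feature to replicate data into ES for full-text search and visualization.

http://t.cn/ROQdHCz

Editor: 叮咚光军

Archive: https://elasticsearch.cn/article/318

Subscribe: https://tinyletter.com/elastic-daily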

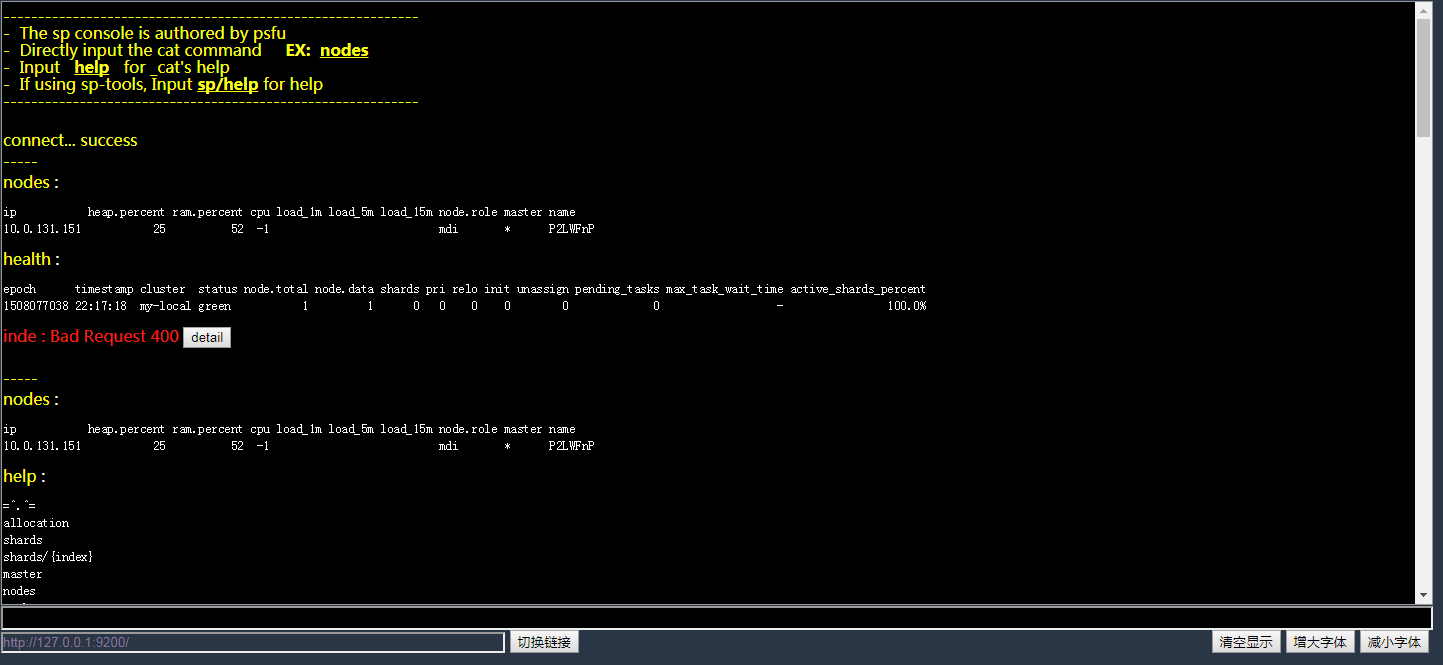

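A Linux-console-style _cat plugin for ES

- Simplifies _cat: type cat commands directly and scroll back through earlier results

- Lets you zoom the font in and out

- Keeps a command history (switch entries with the up/down arrow keys)

- Supports copy and paste by selecting text with the mouse (not yet copied to the system clipboard)

- After installation, use it at http://127.0.0.1:9200/_console; you can also use it locally by opening the HTML file directly

Git repository

Feel free to join group 576037940 to discuss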

Posted by psfu: 2017-10-16 10:31

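Community Daily, Issue 71 (2017-10-16)

1. An older article, reposted: the optimization history of Baidu's search architecture. Many of its lessons are worth keeping in mind when using ES.

http://t.cn/R4wpIvT

2. LogTrail, a log viewer plugin for Kibana.

http://t.cn/RcXglR2

3. The history of Douyu's ELK practice.

http://t.cn/ROHWPdu

Editor: cyberdak

Archive: https://elasticsearch.cn/article/316

Subscribe: https://tinyletter.com/elastic-daily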

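[Translation] Elasticsearch Indexing Performance Optimization (Part 2)

This article is a translation of the second post in QBox's official blog series "Elasticsearch indexing performance optimization". Copyright belongs to the original author, Adam Vanderbush. The series has three parts. A fellow practitioner has already translated the first part (see http://www.zcfy.cc/article/how ... .html), and the third will follow, so stay tuned.

Author: Adam Vanderbush

Translator: 杨振涛@vivo

This series focuses on maximizing Elasticsearch's indexing throughput and reducing monitoring and management overhead.

Elasticsearch is near-real-time: after a document is indexed, it becomes searchable only after the next refresh.

Refreshing is a relatively expensive operation. That is why refreshes happen at a fixed interval by default rather than after every indexed document. Suppose you want to index a large batch of documents and do not need the new data to be searchable right away. In that case, you can temporarily lower the refresh frequency until indexing finishes. This improves indexing performance and can even help search performance.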
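To see the refresh cycle from the client side, the sketch below indexes one document with the refresh=wait_for parameter, which is available from Elasticsearch 5.0. With this parameter the request returns only after the next scheduled refresh has made the document searchable, instead of forcing an extra refresh. The following are illustrative assumptions: the index name, the 5.x/6.x-style URL with the hypothetical type name "doc", ES_URL, and the hypothetical DRY_RUN switch.

```bash
#!/usr/bin/env bash
# Sketch: index one document and wait until a refresh has made it searchable.
# Assumptions: Elasticsearch 5.0+ (refresh=wait_for), a 5.x/6.x-style URL with the
# hypothetical type name "doc", and a hypothetical DRY_RUN switch that only prints.
ES_URL="${ES_URL:-http://localhost:9200}"

# index_doc_visible INDEX ID JSON
index_doc_visible() {
  local url="$ES_URL/$1/doc/$2?refresh=wait_for"
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "PUT $url $3"   # show the request instead of sending it
    return 0
  fi
  # The call returns once a periodic refresh (see refresh_interval) covers this write.
  curl -sS -X PUT "$url" -H 'Content-Type: application/json' -d "$3"
}
```

Compare this with refresh=true, which forces a refresh for each request. wait_for instead leaves refresh scheduling to refresh_interval, so it does not add refresh cost of its own.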

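An index shard consists of multiple segments. In Lucene's core data structures, a segment is essentially a change set of the index. A new segment is created on every refresh, and segments are later merged in the background to keep resource usage efficient; each segment consumes file handles, memory, and CPU. Lucene handles segment merging behind the scenes. If merging is handled poorly, it can consume expensive computing resources and cause Elasticsearch to automatically throttle indexing requests to a single thread.

This article continues with Elasticsearch indexing performance tuning, focusing on indexing settings at the cluster and index level.

1 Watch the refresh_interval parameter

This interval is controlled by the index.refresh_interval parameter. You can set it globally in the Elasticsearch configuration file or individually per index; when both are set, the index setting overrides the global one. Since Elasticsearch 5.0, index-level settings can no longer be placed in the node configuration file, so set them per index or through an index template instead. The default is 1s, so a newly indexed document becomes searchable within at most about 1 second.

Because refreshing is very expensive, one way to increase indexing throughput is to increase refresh_interval. Fewer refreshes mean less load, and more resources can go to the indexing threads. So, depending on your search requirements, consider setting the refresh interval to more than 1 second. During certain operations, such as bulk indexing, you can even temporarily disable refresh on an index and re-enable it manually afterwards.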
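The procedure just described turns refresh off for a bulk load and back on afterwards. It can be wrapped so that the settings are restored even when the load itself fails. This minimal sketch also folds in the zero-replica step discussed below. The following are assumptions: the loader command, the restored values (1s refresh, 1 replica), ES_URL, and the DRY_RUN switch.

```bash
#!/usr/bin/env bash
# Sketch: run a bulk load with refresh and replicas disabled, then restore them.
# Assumptions: restored values (1s, 1 replica), ES_URL and the DRY_RUN switch are
# illustrative; the loader is any command that sends the _bulk requests.
ES_URL="${ES_URL:-http://localhost:9200}"

# es METHOD PATH [JSON]: send one request, or just print it when DRY_RUN=1.
es() {
  local method="$1" path="$2" body="${3:-}"
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$method $path $body"
    return 0
  fi
  if [ -n "$body" ]; then
    curl -sS -X "$method" "$ES_URL$path" -H 'Content-Type: application/json' -d "$body"
  else
    curl -sS -X "$method" "$ES_URL$path"
  fi
}

# bulk_load INDEX LOADER_CMD [ARGS...]: returns the loader's exit status.
bulk_load() {
  local index="$1"; shift
  local rc=0
  es PUT "/$index/_settings" '{"index":{"refresh_interval":"-1","number_of_replicas":0}}'
  "$@" || rc=$?                 # record a failure but continue to the restore step
  es PUT "/$index/_settings" '{"index":{"refresh_interval":"1s","number_of_replicas":1}}'
  es POST "/$index/_refresh"    # make everything loaded so far searchable
  return "$rc"
}
```

For example, run bulk_load my_index ./send_bulk.sh, where send_bulk.sh is a hypothetical script that posts the data to the _bulk endpoint. An interrupt signal is not handled here; a trap could be added for that case.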

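The update settings API can change an index's settings dynamically, making it more efficient for bulk indexing, and then switch it back to a more real-time state. Before bulk indexing starts, set:

```bash
curl -XPUT 'localhost:9200/my_index/_settings' -H 'Content-Type: application/json' -d '{
  "index" : {
    "refresh_interval" : "-1"
  }
}'
```

For a large bulk import, consider disabling replicas with index.number_of_replicas: 0. With replicas enabled, the whole document is sent to the replica nodes and the indexing process is repeated there. This means every replica performs analysis, indexing, and possibly merging. If you instead index with zero replicas and re-enable them when done, recovery is essentially a byte-stream transfer over the network. That is far more efficient than repeating the indexing.

```bash
curl -XPUT 'localhost:9200/my_index/_settings' -H 'Content-Type: application/json' -d '{
  "index" : {
    "number_of_replicas" : 0
  }
}'
```

Once bulk indexing is complete, update the settings again (for example, back to the defaults):

```bash
curl -XPUT 'localhost:9200/my_index/_settings' -H 'Content-Type: application/json' -d '{
  "index" : {
    "refresh_interval" : "1s",
    "number_of_replicas" : 1
  }
}'
```

You can also force a merge:

```bash
curl -XPOST 'localhost:9200/my_index/_forcemerge?max_num_segments=5'
```

The refresh API explicitly refreshes one or more indices, making all operations performed since the last refresh available for search. Real-time or near-real-time behavior depends on the index engine in use. For example, the internal engine requires a refresh to be called, but by default a refresh is scheduled periodically.

```bash
curl -XPOST 'localhost:9200/my_index/_refresh'
```

2 Segments and merging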

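Segment merging is computationally expensive and consumes a lot of disk I/O. Merges take time, especially for large segments, so they normally run in the background. This is usually fine, because merges of large segments are relatively rare.

Sometimes, however, merging falls behind the rate at which new segments are produced. When that happens, Elasticsearch automatically throttles indexing requests to a single thread. This prevents a segment explosion, in which hundreds of segments could otherwise be created before they are merged.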
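One way to notice the segment explosion described above is to count segments per shard copy. The sketch below assumes its input comes from the _cat/segments API with the column list index,shard,prirep,segment, for example curl -s 'localhost:9200/_cat/segments/my_index?h=index,shard,prirep,segment'. The function name and output format are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: count segments per shard copy from _cat/segments output.
# Assumed input: one line per segment with columns "index shard prirep segment".
# Output: one line per shard copy, "index/shard/prirep count", sorted by key.
segments_per_shard() {
  awk '{ n[$1 "/" $2 "/" $3]++ } END { for (k in n) print k, n[k] }' | LC_ALL=C sort
}
```

If the count for a shard keeps climbing during a bulk load, merging is probably falling behind.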

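Elasticsearch is conservative here by default, because it does not want background merging to starve search performance. But sometimes, especially with SSDs or in logging workloads, the throttle limit is too low.

The default of 20 MB/s is a good setting for spinning disks. With SSDs, consider raising it to 100-200 MB/s:

```bash
curl -XPUT 'localhost:9200/_cluster/settings' -H 'Content-Type: application/json' -d '{
  "persistent" : {
    "indices.store.throttle.max_bytes_per_sec" : "100mb"
  }
}'
```

If you are doing a bulk import and do not care about search at all, you can disable merge throttling entirely. Indexing then runs as fast as your disks allow:

```bash
curl -XPUT 'localhost:9200/_cluster/settings' -H 'Content-Type: application/json' -d '{
  "transient" : {
    "indices.store.throttle.type" : "none"
  }
}'
```

Setting the throttle type to none disables merge throttling completely. Once the bulk import is done, set it back to merge:

```bash
curl -XPUT 'localhost:9200/_cluster/settings' -H 'Content-Type: application/json' -d '{
  "transient" : {
    "indices.store.throttle.type" : "merge"
  }
}'
```

Note: the settings above apply only to Elasticsearch 1.x. Elasticsearch 2.x removed these store-level rate-limiting settings (indices.store.throttle.type, indices.store.throttle.max_bytes_per_sec, index.store.throttle.type, index.store.throttle.max_bytes_per_sec). Throttling moved down into Lucene's ConcurrentMergeScheduler, which manages it automatically.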

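The merge scheduler (ConcurrentMergeScheduler) controls the execution of merge operations when they are needed. Merges run in separate threads. Once the maximum number of threads is reached, further merges wait until a merge thread becomes available.

The merge scheduler supports the following dynamic setting:

index.merge.scheduler.max_thread_count

The maximum number of threads defaults to Math.max(1, Math.min(4, Runtime.getRuntime().availableProcessors() / 2)), which works well for solid-state drives (SSDs). With spinning disks, lower it to 1.

Spinning media handles concurrent I/O poorly, so you need to reduce the number of threads that can access the disk concurrently per index. This setting allows max_thread_count + 2 threads to operate on the disk at once, so a value of 1 allows three threads.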
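The default formula and the max_thread_count + 2 rule can be checked with a little arithmetic. This sketch mirrors the Java expression quoted above in Bash. Bash integer division truncates in the same way as Java's int division, so the results should match. The function names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch of the default quoted above:
#   Math.max(1, Math.min(4, Runtime.getRuntime().availableProcessors() / 2))

# default_merge_threads CPUS
default_merge_threads() {
  local t=$(( $1 / 2 ))   # integer division, as in Java
  (( t > 4 )) && t=4      # Math.min(4, ...)
  (( t < 1 )) && t=1      # Math.max(1, ...)
  echo "$t"
}

# max_disk_threads MAX_THREAD_COUNT: threads allowed on the disk at once.
max_disk_threads() {
  echo $(( $1 + 2 ))
}
```

On Linux, default_merge_threads "$(nproc)" gives the default for the current machine (nproc comes from GNU coreutils).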

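If you use spinning disks rather than SSDs, add the following to the Elasticsearch configuration file. This only works before Elasticsearch 5.0. From 5.0 on, index-level settings can no longer be set in the node configuration, so use the per-index calls below or an index template instead:

```yaml
index.merge.scheduler.max_thread_count: 1
```

You can also set it for a single index:

```bash
curl -XPUT 'localhost:9200/my_index/_settings' -H 'Content-Type: application/json' -d '{
  "index.merge.scheduler.max_thread_count" : 1
}'
```

or for all existing indices:

```bash
curl -XPUT 'localhost:9200/_settings' -H 'Content-Type: application/json' -d '{
  "index.merge.scheduler.max_thread_count" : 1
}'
```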
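To decide which of these settings applies, you can read the disk type on Linux from /sys/block/<device>/queue/rotational, which is 1 for spinning disks and 0 for SSDs. This minimal sketch assumes you know which block device holds the data path. The function names are hypothetical. For an SSD it prints nothing, so the default stays in place.

```bash
#!/usr/bin/env bash
# Sketch: decide whether to override index.merge.scheduler.max_thread_count.
# Assumption: Linux sysfs, where queue/rotational is 1 for spinning disks, 0 for SSDs.

# merge_threads_for_disk ROTATIONAL_FILE: prints 1 for a spinning disk, nothing for an SSD.
merge_threads_for_disk() {
  if [ "$(cat "$1")" = "1" ]; then
    echo 1
  fi
}

# merge_settings_body N: JSON body for the per-index _settings call.
merge_settings_body() {
  printf '{"index.merge.scheduler.max_thread_count" : %s}\n' "$1"
}
```

For example, pass /sys/block/sda/queue/rotational (sda is an assumed device name). If a value is printed, send the output of merge_settings_body as the -d body of the per-index curl call.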

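3 Flushing the transaction log

The transaction log (translog) prevents data loss when a node fails. It is designed to help a shard recover operations that would otherwise be lost between flushes. The log is committed to disk every 5 seconds or at the end of every index, delete, update, or bulk request, whichever comes first.

Changes to Lucene are persisted to disk only on a Lucene commit. A commit is a relatively heavy operation, so it cannot be performed after every index or delete operation. Changes made after one commit and before the next would be lost if the process exits or the hardware fails.

To prevent this data loss, each shard has an associated transaction log, also called a write-ahead log. Every index or delete operation is written to the translog after the internal Lucene index has processed it. After a crash, recent transactions can be replayed from the translog to recover the shard.

An Elasticsearch flush essentially performs a Lucene commit and starts a new translog. This happens automatically in the background to keep the translog from growing too large, because replaying a large translog during recovery could take a long time. The functionality is also exposed through an API, although it rarely needs to be triggered manually.

Compared with refreshing an index shard, the truly expensive operation is flushing its translog, because that involves a Lucene commit. Elasticsearch decides when to flush based on a number of dynamically changeable triggers. Delaying flushes, or disabling them entirely, can increase indexing throughput. There is no free lunch, though: a delayed flush takes longer when it finally runs.

The following dynamically updatable settings control how often the in-memory buffer is flushed to disk:

index.translog.flush_threshold_size - once the translog reaches this size, a flush occurs; the default is 512mb.

index.translog.flush_threshold_ops - the number of operations after which a flush occurs; unlimited by default.

index.translog.flush_threshold_period - how long to wait before triggering a flush regardless of translog size; the default is 30 minutes.

index.translog.interval - how often to check whether a flush is needed, randomized between this interval and twice this interval; the default is 5 seconds.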
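As a rough mental model only, and not Elasticsearch's actual code, the triggers above can be read as "flush when any threshold is reached", checked every index.translog.interval. The sketch uses the defaults listed above. The variable and function names are hypothetical.

```bash
#!/usr/bin/env bash
# Simplified model (not Elasticsearch's implementation) of the translog flush triggers.
# Defaults from the list above: 512mb size, unlimited ops, 30 minute period.
FLUSH_SIZE_BYTES=$(( 512 * 1024 * 1024 ))
FLUSH_OPS=""                       # empty means unlimited
FLUSH_PERIOD_SECS=$(( 30 * 60 ))

# should_flush SIZE_BYTES OPS AGE_SECS: prints which trigger fires, or "no".
# In the real system this check would run every index.translog.interval (5s by default).
should_flush() {
  local size="$1" ops="$2" age="$3"
  if (( size >= FLUSH_SIZE_BYTES )); then
    echo "size"
  elif [ -n "$FLUSH_OPS" ] && (( ops >= FLUSH_OPS )); then
    echo "ops"
  elif (( age >= FLUSH_PERIOD_SECS )); then
    echo "period"
  else
    echo "no"
  fi
}
```

Raising FLUSH_SIZE_BYTES in this model corresponds to a dynamic settings update of index.translog.flush_threshold_size on a real index, for example to "1gb".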

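You can raise index.translog.flush_threshold_size from the default 512MB to, say, 1GB, so that larger segments accumulate in the translog before a flush occurs. Building larger segments means fewer flushes and fewer merges of large segments. Together, these changes reduce disk I/O and improve indexing efficiency.

Of course, this requires enough free heap memory for the extra buffering, so keep that in mind when tuning these settings.

4 Sizing the indexing buffer

The indexing buffer stores newly indexed documents. When it fills up, the documents in the buffer are written to a segment on disk. The buffer is divided among all shards on the node.

The following settings are static and must be configured on every data node in the cluster:

indices.memory.index_buffer_size - accepts a percentage or a byte size; the default is 10%, meaning 10% of the heap allocated to the node is used as the indexing buffer, shared across all shards.

indices.memory.min_index_buffer_size - when index_buffer_size is a percentage, sets an absolute minimum; the default is 48MB.

indices.memory.max_index_buffer_size - when index_buffer_size is a percentage, sets an absolute maximum; unbounded by default.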
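A quick way to see how these three settings interact is to compute the resulting buffer for a given heap. The sketch works in whole megabytes with integer arithmetic. The per-shard figure assumes an even split across shards, which is a simplification. The function names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: effective indexing buffer in MB for a percentage-based index_buffer_size.

# index_buffer_mb HEAP_MB [PCT=10] [MIN_MB=48] [MAX_MB=unbounded]
index_buffer_mb() {
  local heap_mb="$1" pct="${2:-10}" min_mb="${3:-48}" max_mb="${4:-}"
  local size=$(( heap_mb * pct / 100 ))
  (( size < min_mb )) && size=$min_mb              # apply the absolute minimum
  if [ -n "$max_mb" ] && (( size > max_mb )); then  # apply the maximum, if set
    size=$max_mb
  fi
  echo "$size"
}

# per_shard_mb BUFFER_MB SHARDS: rough share per shard, assuming an even split.
per_shard_mb() {
  echo $(( $1 / $2 ))
}
```

For example, a 256MB heap hits the 48MB floor, while a 4GB heap gets about 409MB at the default 10%.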

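The indices.memory.index_buffer_size setting defines the percentage of heap available for indexing; the remaining heap is used mainly for search. If you index a lot of data, the default 10% may be too small and is worth increasing.

5 Thread pool sizes for indexing and bulk operations

Next, try increasing the size of the index and bulk thread pools at the node level to see whether it improves performance.

index - for index and delete operations. The thread pool type is fixed. The default size equals the number of available processors and the queue_size is 200. The maximum size of the pool is 1 + the number of available processors.

bulk - for bulk operations. The thread pool type is fixed. The default size equals the number of available processors and the queue size is 50. The maximum size of the pool is 1 + the number of available processors.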
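Before enlarging these pools, check whether requests are actually being rejected. This sketch assumes Elasticsearch 5.x, where the pools are named index and bulk (later versions renamed the bulk pool to write). It also assumes that _cat/thread_pool accepts a pool list, e.g. curl -s 'localhost:9200/_cat/thread_pool/bulk,index?h=node_name,name,active,queue,rejected'. The function names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: spot index/bulk pools that reject requests, plus the sizes described above.
# Assumed input columns: node_name name active queue rejected (one line per node and pool).

# rejecting_pools: prints "node pool rejected" for every pool with rejections.
rejecting_pools() {
  awk '$5 > 0 { print $1, $2, $5 }'
}

# pool_sizes CORES: default size and maximum of the fixed index/bulk pools.
pool_sizes() {
  echo "size=$1 max=$(( $1 + 1 ))"
}
```

The rejected column is a counter accumulated since the node started, so compare two samples taken some time apart rather than reading a single value.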

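A single shard corresponds to a single Lucene index, so the number of threads that can index concurrently is limited. In Lucene the default is 8; in ES it can be set with the index.index_concurrency setting.

Think carefully about this setting's default, especially when a node indexes into a single index with only one shard on that node.

The index/bulk thread pools protect and control concurrency, so it is usually reasonable to raise the default. This is especially true when the node hosts no other shards, though you should evaluate whether it is worth it.

About the translator

杨振涛

Head of the vivo Internet search engine team and development manager, with 10 years of experience in data and software. He has designed and built system architectures in gene sequencing, e-commerce, IM, and device-maker Internet services. He focuses on storage, retrieval, and visualization for real-time distributed systems and big data, especially search engines and deep learning applied to NLP. He is a technical translation enthusiast, a TED Translator, and an editor at the InfoQ China community.

Reproduction without authorization is prohibited.

Original English article: https://qbox.io/blog/maximize- ... art-2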

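Community Daily, Issue 70 (2017-10-15)

1. (Requires a proxy to access) How to migrate an Elasticsearch cluster remotely without downtime.

http://t.cn/ROlVXTV

2. (Requires a proxy to access) The theory every software engineer should know about improving the search experience.

http://t.cn/Rp0DO48

3. An IT director in the online travel industry explains how Elasticsearch helped the company improve search and automate data analytics.

http://t.cn/ROWKRkJ

Editor: 至尊宝

Archive: https://elasticsearch.cn/article/314

Subscribe: https://tinyletter.com/elastic-daily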

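Community Daily, Issue 69 (2017-10-14)

1. How to do personalized sorting with nested structures

http://t.cn/RONaiTA

2. A case study: upgrading from ES 2.3.4 to ES 5.4.1 improved performance by 30-40%

http://t.cn/RONXHBr

3. A quick evaluation of Java 9 on ES 6, for those who like to try new things early

http://t.cn/RONC5Fk

Editor: bsll

Archive: https://www.elasticsearch.cn/article/313

Subscribe: https://tinyletter.com/elastic-daily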

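Elastic and Alibaba Cloud partner to offer a new "Alibaba Cloud Elasticsearch" service

New service launch: Alibaba Cloud Elasticsearch will include Elasticsearch, Kibana, and Elastic's X-Pack features

Elastic is the company behind Elasticsearch and the Elastic Stack, the most widely used collection of open-source products for mission-critical search, logging, and analytics use cases. Today it announced a new partnership with Alibaba Cloud, the cloud computing arm of Alibaba Group (NYSE: BABA, "Alibaba"). The two companies will jointly develop and launch hosted Elasticsearch on Alibaba Cloud, bringing a new user experience to the Chinese market.

The new service, called "Alibaba Cloud Elasticsearch", lets Alibaba's customers freely use Elasticsearch's powerful real-time search, ingestion, and data analytics capabilities in a one-stop, leading solution.

Simon Hu (胡晓明), President of Alibaba Cloud, said: "As a leading global cloud computing provider, Alibaba Cloud is committed to offering customers the most advanced products through our platform, helping them stay competitive and drive innovation." He added: "Alibaba Cloud Elasticsearch will be a highly differentiated service because it uses Elastic's advanced search products and powerful X-Pack features, and it is easy to use and manage at every level of the service."

Alibaba Cloud Elasticsearch is now generally available, and customers can add it to their cloud services with simple configuration. Customers can combine Elasticsearch's real-time search with their own applications and ingest data into Alibaba Cloud Elasticsearch with Logstash or Beats. They can visualize real-time and historical data with Kibana dashboards. On top of this, X-Pack features such as security, alerting, monitoring, reporting, Graph analytics, and machine learning give developers a one-stop product experience.

In addition, Alibaba Cloud and Elastic will focus on technical improvements to keep Alibaba Cloud Elasticsearch current with the latest features. Log ingestion and other services will become available in the future.

Shay Banon, founder and CEO of Elastic, said: "China is a growing market for us. Over the past few years we have seen the Elasticsearch community in China grow to more than 5,000 developers. By partnering with Alibaba Cloud, the largest cloud provider in Asia, and combining Elasticsearch's real-time capabilities with powerful X-Pack features such as security, alerting, and machine learning, we can together accelerate innovation across China's broad developer ecosystem and build, host, and manage many more kinds of applications."

Alibaba Cloud President 胡晓明 (Simon Hu) and Elastic founder and CEO Shay Banon

Learn more

Alibaba Cloud Elasticsearch

Elastic & Alibaba Blog

About Alibaba Cloud

Alibaba Cloud, founded in 2009, is the cloud computing business of Alibaba Group. Gartner currently ranks it among the top 3 infrastructure-as-a-service (IaaS) providers worldwide. According to IDC's 2016 research, Alibaba Cloud is the largest public cloud provider in China and ranks fourth worldwide in IaaS revenue. Alibaba Cloud offers a comprehensive suite of cloud computing services to businesses worldwide. Its customers include merchants doing business on Alibaba Group's marketplaces, start-ups, enterprises, and government organizations. Alibaba Cloud is the official Cloud Services Partner of the International Olympic Committee. For more information, visit https://www.aliyun.com.

About Elastic

Elastic builds software that makes data usable in real time and at scale for search, logging, and analytics use cases. Founded in 2012, Elastic develops the open-source Elastic Stack (Elasticsearch, Kibana, Beats, and Logstash), X-Pack (commercial features), and Elastic Cloud (a hosted service). To date, its products have been downloaded more than 150 million times. Elastic is backed by more than $100 million in funding from Benchmark Capital, Index Ventures, and NEA. It has more than 600 employees in 30 countries. For more information, visit elastic.co/cn.

https://www.elastic.co/cn/abou ... cloud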

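[SandStone (杉岩) Hiring] ES R&D Engineer

Responsibilities:

Optimize and develop around Elasticsearch and Lucene to meet business system requirements;

Optimize search engine performance.

Requirements:

Bachelor's degree or above, with at least two years of development experience;

Familiarity with at least one Lucene-based open-source search engine, such as Solr or Elasticsearch; experience studying the Lucene source code is a plus;

Experience processing massive amounts of data is a plus;

Stays positive and optimistic when facing hard problems, and ultimately solves them;

Good communication and teamwork skills;

Strong curiosity and capacity for innovation.

Send your résumé to: liaoying@szsandstone.com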

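Community Daily, Issue 68 (2017-10-13)

1. A handy real-time monitor of Elasticsearch API compatibility

http://t.cn/RO5pcz3

2. Performance optimization: ~9% faster indexing when documents have explicit IDs (primary keys)!

http://t.cn/RoJex1R

3. Head to head: which Chinese analyzer for ES is best?

http://t.cn/ROyBcAB

Editor: laoyang360

Archive: https://elasticsearch.cn/article/310

Subscribe: https://tinyletter.com/elastic-daily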
